I’m actually surprised by the comments in here. This technology is incredibly disruptive to authors. If they are correct that their intellectual property has been misused by these companies to train LLMs, then they absolutely should have the right to prevent that.

You can be both pro-AI and pro-advancement and still respect creators’ intellectual property rights, including the right to not have all content stolen by megacorporations and used to create profits while decimating entire industries.

Eventually the bad actors are going to lose a lot of money trying to litigate their theft of people’s art. It was always going to end up in the legal system. These apps are even programmed to scrub watermarks and signatures. It’s deliberate theft.

Yes, thank you for this comment.

Exactly this. This is the equivalent of me taking a movie, holding a screening of it, charging for it, and then being displeased when the creators demand an explanation.

It’s more like reading a book and then charging people to ask you questions about it.

AI training isn’t only for mega-corporations. We can already train our own open source models, so we shouldn’t let people put up barriers that will keep out all but the ultra-wealthy.

It’s more like reading a book and then charging people to ask you questions about it.

No, it’s really nothing like reading at all. Your example requires a human element. This is just the consumption of data, not reading.

Humans are the ones making these models. It’s not entirely the same thing, but you should read this article by the EFF.

I don’t think that it is even remotely close to being the same thing. I’m sorry but we shouldn’t be affording companies the ability to profit off other people’s creations without their consent, regardless of how current copyright law works.

Acting as though a human writing a summary is the same thing as a vast network of computers processing data hundreds if not thousands of times faster than a human is foolish. Perhaps it is also foolish to try to apply our current copyright laws (which already favour large corporations and not individual creators) to this slew of new technology, but just ignoring the fundamental difference between the two is no way of going about it. We need copyright reform, we need protections for creators, and we need to stop acting as though machine learning algorithms are remotely comparable to humans in their capabilities, responsibilities, and rights.

There is a perfectly reasonable way of doing this ethically, and that is using content that people have provided to the model of their own volition, with their consent either volunteered or paid for, but not scraped from an epub, regardless of whether you bought it or downloaded it from libgen.

There are already companies training machine learning models ethically in this manner, and if creators do not want their content used as training data, it should not be.

No, it’s more like checking out every book from the library, and spending 450 years training at the speed of light, being evaluated on how well you can exactly reproduce the next part of any snippet taken from any book.

One of the largest communities on Lemmy is !piracy@lemmy.dbzer0.com, so I’m not really surprised that there are people who don’t care about copyright :)

On the other hand, if a human is allowed to write a summary of a book, why should an AI not be allowed to do the same thing? Are they going to sue CliffsNotes too?

My main point is that if people don’t want their content used for training LLMs they should absolutely have the option to not have their content used to train LLMs.

Training databases should be ethically sourced from opt-in programs, which some companies, such as Adobe, are already running.

My main point is that if people don’t want their content used for training LLMs they should absolutely have the option to not have their content used to train LLMs.

How can one prove that their content is being used to train the LLM though, rather than something that’s derivative of their content like reviews of it?

There is already lots of evidence that they have scraped copyrighted art and photographs for their datasets.

People keep taking issue with this article’s use of “summarizing” and linking to Wikipedia… Summaries of copyrighted work are obviously not illegal.

This article is oversimplified and does a crummy job of explaining the problem. Ars Technica does a much better job explaining.

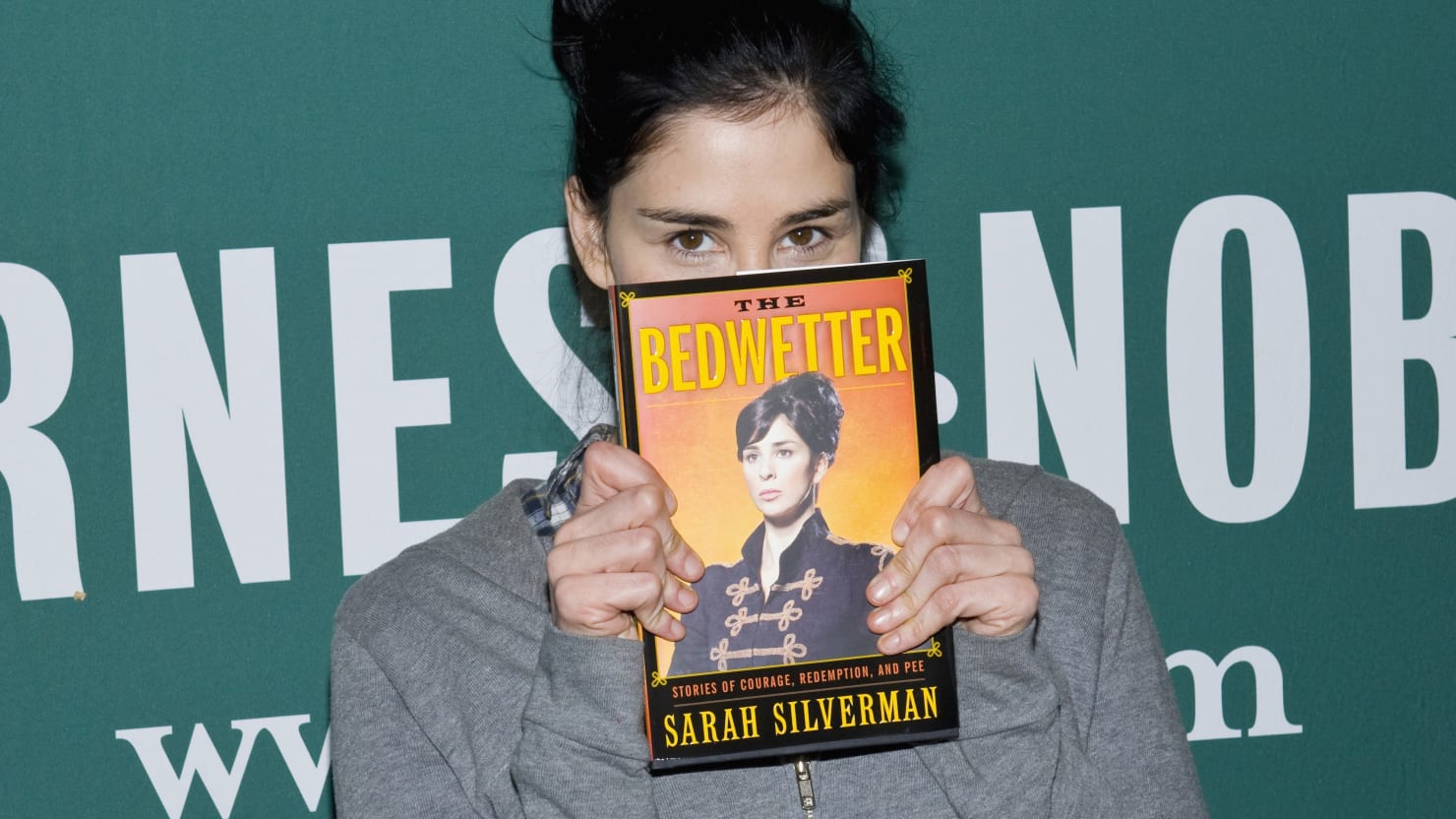

The fact that the AI can summarize these works in detail is proof that it was trained using copyrighted material without permission (which is not fair use). Sarah Silverman is obviously not going to be hurt financially by this, but there are hundreds of thousands of authors who definitely will be affected. They have every right to sue.

Why does “fair use” even fall into it? I’m not familiar with their specific license, but the general definition of copyright is:

A copyright is a type of intellectual property that gives its owner the exclusive right to copy, distribute, adapt, display, and perform a creative work, usually for a limited time.

Nothing was copied, or distributed (in a form that anybody can consider “The Work”), or displayed, or performed. The only possible legal argument they have is adapting as a derivative work. And anybody who is familiar with how an LLM works knows that the form that results from reading in content is completely different from the source.

LLMs/LDMs are not taking in billions of books and putting them into a database. It is a very lossy process. Out of all of the billions of images used to train Stable Diffusion, the resulting model is 4 GB. There is no universe where you can store billions of images in a mere 4 GB. Stable Diffusion cannot and will not, pixel-by-pixel, reproduce a Van Gogh. It can make something that kind of looks like a Van Gogh, but styles are not copyrightable.
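To put rough numbers on the size argument, here is a back-of-the-envelope sketch. The 4 GB figure is from the comment above; the image count of 2 billion is an assumption (the comment only says “billions”), and the names are made up for illustration.

```python
# Rough estimate: how many bytes of model weights exist per training image?
MODEL_SIZE_BYTES = 4 * 10**9   # "4 GB" from the comment (decimal gigabytes assumed)
TRAINING_IMAGES = 2 * 10**9    # ASSUMPTION: "billions of images" taken as 2 billion


def bytes_per_image(model_bytes: int, n_images: int) -> float:
    """Average number of weight bytes available per training image."""
    return model_bytes / n_images


budget = bytes_per_image(MODEL_SIZE_BYTES, TRAINING_IMAGES)
print(f"{budget:.1f} bytes of weights per training image")
```

Under those assumptions the average budget is about two bytes per image, far less than even a tiny thumbnail. It is only an average, so it says nothing about any individual image.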

The same applies to an LLM like ChatGPT. It cannot reproduce entire books, or anywhere close to that. If you ask it to recreate Page 25 of Silverman’s book, it can’t do it. If it doesn’t even contain a minor portion of the original material, it can’t even be considered a derivative work.

They don’t have a case. They have a lot of publicity and noise, but they will lose to inevitability.

You make a lot of excellent points, but I think the main issue of contention is just using copyrighted work to train generative AI without the author’s permission regardless.

If they did ask permission, there would be no problem. But an author or artist should be given the choice if their work is going to be used to train an AI.

I think the main issue of contention is just using copyrighted work to train generative AI without the author’s permission regardless.

You must define that in legal terms. This is a lawsuit, after all. It’s not illegal to “just use” copyrighted work. The words “generative AI” are not in a federal or state bill anywhere in the US.

They can have an “issue of contention” all they want, but if they can’t prove anything legally, they have nothing.

A lot of these comments are missing a large point which is that, if the claim is true, the books are being pirated and then effectively used for a commercial application.

So the authors are losing money through this process and did not give their permission for their work to be used in a commercial way.

The decision of this case will be wildly important for the development of AI.

If they have access to some library with those books they are ok.

I doubt they just used pirated books to train their AI and then published it without having a non-pirated paper trail; it is not that hard.

But let’s see.

The only problem here is how they accessed the books; they don’t share copyrighted material with others. But I don’t think anyone should be held guilty for reading a book, so I hold the same stance for AI.

If you don’t want people to read your book, just don’t publish it.

Do you know what Library Genesis and Z-Library are? They are literally libraries of pirated materials.

And yeah, they can read the book, but they shouldn’t be able to use its contents commercially (e.g. to make money) without the permission of the writer/copyright holder.

My pie in the sky hope is that copyright somehow becomes less stringent after all of this.

Don’t get me wrong, I want protections for creators and support reasonable copyright (life of the author +25 years with the possibility of a 15 year extension), but letting a company lord over an IP for damn near a century isn’t ideal for anyone.

The major scenario that I at least hope holds true out of this is that the AI “creations” aren’t eligible for copyright themselves. If the powers that be allow all this AI created stuff copyright protection it’s going to be a gigantic mess.

Pure “prompt → image” with nothing in between I absolutely agree. It’s lazy and ripe for abuse by copyright trolls. That being said there’s a lot more in the world of AI assisted art than what most people are aware of.

Determining where the legal lines will be drawn is going to be a monumental task but I think there’s value in allowing authors to retain copyright on AI assisted works. I also can’t see the free open source models not going the way of restricting training data to public domain works like Adobe did with Firefly if that becomes a legal issue.

Lawyers are going to have lots of gigs these days.

If they don’t get fired first for using ChatGPT and not double-checking the results.

That’s ironic.

I was expecting lawsuits to fly over software source code laundering, but yeah, this makes sense too.

I guess she found a way to make money on a book nobody is buying after all.

They made a musical out of it so I’m sure it sold just fine. The pointless disparaging based on no facts isn’t very useful to this topic.

I tested by asking ChatGPT 3.5 specific questions about The Bedwetter, and it seems like it was not trained on the full text of the book. I asked it what the first sentence is, and then what the second paragraph is, and it gave plausible but incorrect answers. I asked it for the table of contents, and then whether a specific chapter was in the book, and it said “my responses are generated based on pre-existing data and do not have real-time access to specific book content”. I asked who wrote the foreword and who wrote the afterword. It said Patton Oswalt wrote the foreword and that there is no afterword. In reality, Sarah wrote the foreword and God wrote the afterword.

ChatGPT conversation

Table of contents and first chapter from Google Books.

LLMs compress data; there’s no way ChatGPT could remember every detail of the book alongside all the other information it stores in its encodings. The issue isn’t whether the entire text of the book is contained within the encodings, it’s whether it was trained on the book in the first place.

‘Reading my book infringes on my copyright,’ say confused writers.

This is a strawman.

You cannot act as though feeding LLMs data is remotely comparable to reading.