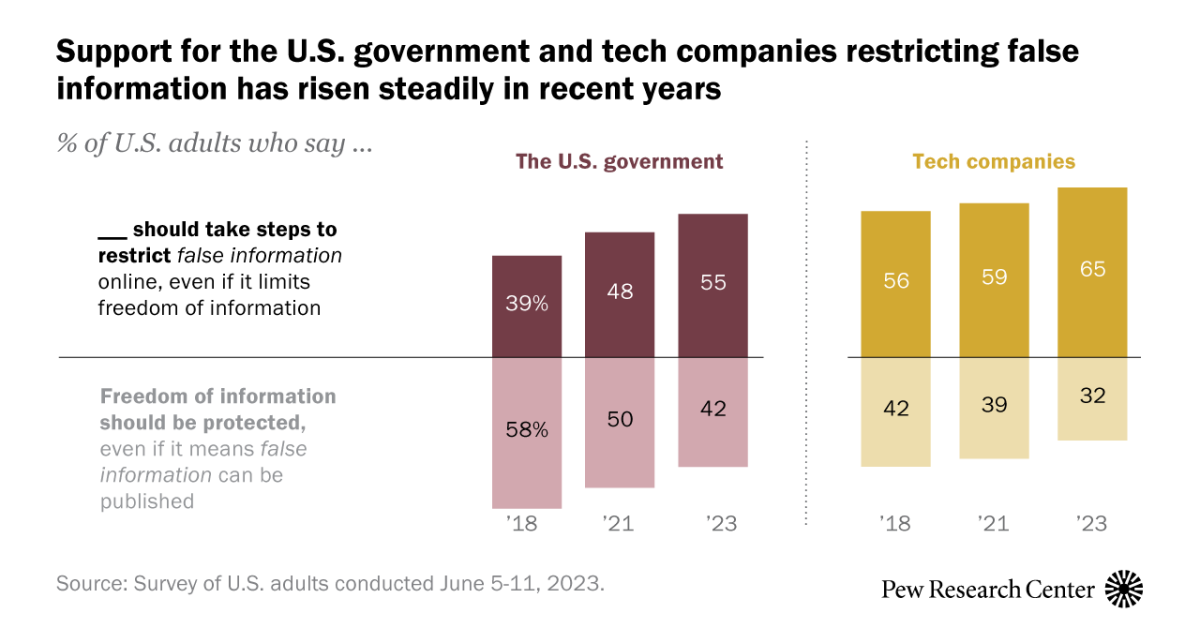

65% of Americans support tech companies moderating false information online and 55% support the U.S. government taking these steps. These shares have increased since 2018. Americans are even more supportive of tech companies (71%) and the U.S. government (60%) restricting extremely violent content online.

Y’all gonna regret this when Ron DeSantis gets put in charge of deciding which information is false enough to be deleted.

What a slippery slippery slope….

I personally like transparent enforcement of false information moderation. What I mean by that is something similar to Beehaw, where you have public mod logs. A quick check is enough to get a vibe of what is being filtered, and in Beehaw’s case they’re doing an amazing job.

Mod logs also allow for a clear record of what happened, useful in case a person does not agree with the action a moderator took.

In that case it doesn’t really matter if the moderators work directly for big tech; misuse would be very clearly visible, and discontented people could raise awareness or just leave the platform.

65% of Americans support tech companies moderating false information online

Aren’t those tech companies the ones who kept boosting false information in the first place to get ad revenue? FB did it, YouTube did it, Twitter did it, Google did it.

How about breaking them up into smaller companies first?

I thought the labels on potential COVID or election disinformation were pretty good, until companies stopped doing so.

Why not do that again? Those who are gonna claim that it’s censorship will always do so. But what needs to be done is to prevent those who are not well informed from falling into the antivax / far-right rabbit hole.

Also, force content creators / websites to prominently show who is funding / paying them.

I don’t think this is really about censorship. You can say and advertise whatever you want, but after this, if it can be proven false, you have to pay the price. All it does is make people double-check their facts and figures before they go shooting off random falsehoods.

What worries me is who defines what the truth is? Reality itself became political decades ago, probably starting with the existence of global warming and now such basic foundational facts as who won an election. If the government can punish “falsehood”, what do you do if the GOP is in charge and they determine that “Biden won 2020” is such a falsehood?

If the FCC can regulate content on television, they can regulate content on the internet.

The only reason the FCC doesn’t is that the Republican-dominated FCC, when Ajit Pai was in charge, argued that broadband is an “information service” and not a “telecommunications service,” which is like the hair-splittingest of splitting fucking hairs. It’s fucking both.

Anyway, once it was classified as “information service” it became something the FCC (claimed it) didn’t have authority to regulate in the same way, allowing them to gut net neutrality.

If the FCC changed the definition back to telecommunications, they wouldn’t be able to regulate foreign websites, but they could easily regulate US sites and entities that want to do business in the US using an internet presence.

The key is defining terms like “false” and “violent.”

Checks out. I wouldn’t want the US government doing it, but deplatforming bullshit is the correct approach. It takes more effort to reject a belief than to accept it and if the topic is unimportant to the person reading about it, then they’re more apt to fall victim to misinformation.

Although suspension of belief is possible (Hasson, Simmons, & Todorov, 2005; Schul, Mayo, & Burnstein, 2008), it seems to require a high degree of attention, considerable implausibility of the message, or high levels of distrust at the time the message is received. So, in most situations, the deck is stacked in favor of accepting information rather than rejecting it, provided there are no salient markers that call the speaker’s intention of cooperative conversation into question. Going beyond this default of acceptance requires additional motivation and cognitive resources: If the topic is not very important to you, or you have other things on your mind, misinformation will likely slip in.

Additionally, repeated exposure to a statement increases the likelihood that it will be accepted as true.

Repeated exposure to a statement is known to increase its acceptance as true (e.g., Begg, Anas, & Farinacci, 1992; Hasher, Goldstein, & Toppino, 1977). In a classic study of rumor transmission, Allport and Lepkin (1945) observed that the strongest predictor of belief in wartime rumors was simple repetition. Repetition effects may create a perceived social consensus even when no consensus exists. Festinger (1954) referred to social consensus as a “secondary reality test”: If many people believe a piece of information, there’s probably something to it. Because people are more frequently exposed to widely shared beliefs than to highly idiosyncratic ones, the familiarity of a belief is often a valid indicator of social consensus.

Even providing corrections next to misinformation leads to the misinformation spreading.

A common format for such campaigns is a “myth versus fact” approach that juxtaposes a given piece of false information with a pertinent fact. For example, the U.S. Centers for Disease Control and Prevention offer patient handouts that counter an erroneous health-related belief (e.g., “The side effects of flu vaccination are worse than the flu”) with relevant facts (e.g., “Side effects of flu vaccination are rare and mild”). When recipients are tested immediately after reading such hand-outs, they correctly distinguish between myths and facts, and report behavioral intentions that are consistent with the information provided (e.g., an intention to get vaccinated). However, a short delay is sufficient to reverse this effect: After a mere 30 minutes, readers of the handouts identify more “myths” as “facts” than do people who never received a hand-out to begin with (Schwarz et al., 2007). Moreover, people’s behavioral intentions are consistent with this confusion: They report fewer vaccination intentions than people who were not exposed to the handout.

The ideal solution is to cut off the flow of misinformation and reinforce the facts instead.

But how do you implement such a thing without horrible side effects?